I currently own an Nvidia GTX 960 card and I’m in the market for a new graphics card*. In a perfect world I could just add 4GB of RAM and some quieter fans to my existing card, which would set me up for a few more years, but this just isn’t possible. So that got me thinking: why aren’t graphics cards modular?

I’m sure computer hardware enthusiasts could chime in and tell me the many reasons why graphics cards are the way they are, but to me a graphics card is just a small computer. It has its own case, processor, RAM, and cooling system. I believe a graphics card should be as customizable as a desktop computer.

Look at mini PCs these days: many are comparable in size to a graphics card, if not smaller, and they can be opened up and modded easily. I would also wager that most people who are familiar with graphics cards are also capable of building/customizing computers, so building a custom card should be a familiar endeavor.

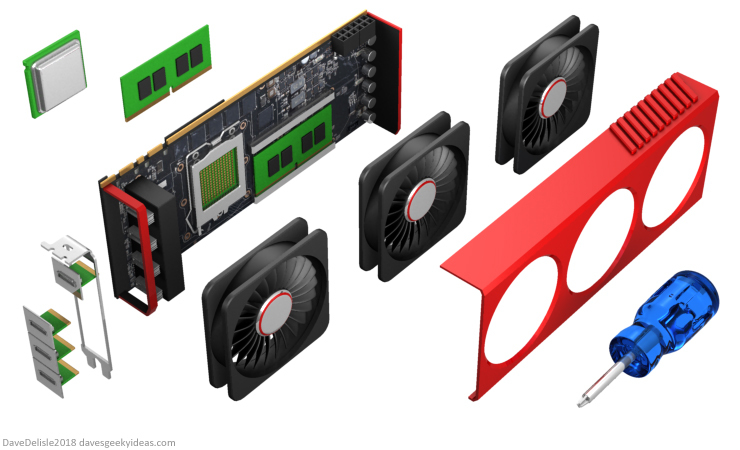

A modular card would feature slots for RAM sticks, a graphics processor that is mounted like a desktop CPU, custom display ports, and your choice of cooling system. All these features, along with cosmetic options like RGB lighting and unique case/shroud designs, create endless possibilities for graphics cards.

These modular cards would also be easier to repair and upgrade as needed. Perhaps this is why graphics cards are not modular? Just a hunch.

*Why are graphics cards so expensive these days? Are they made of Gold-Pressed Latinum??

Sounds like a great idea, actually! Not for the graphics card sellers, though. But if it is technologically possible, it could be great.

USB Type-C supports alternate modes, including HDMI, DisplayPort, and Mobile High-Definition Link (MHL), and HDMI is backwards-compatible with DVI-D (digital interface only). I don’t think it would be too difficult to implement an edge connector interface that is essentially USB Type-C with a different connector, which display port connectors could plug into. There may need to be some extra connections for VGA signals (which can also be used for DVI-A, or combined using a passive circuit to generate component or composite video).

Remember in the ’90s when you could add memory to your graphics card?